
Data Visualization

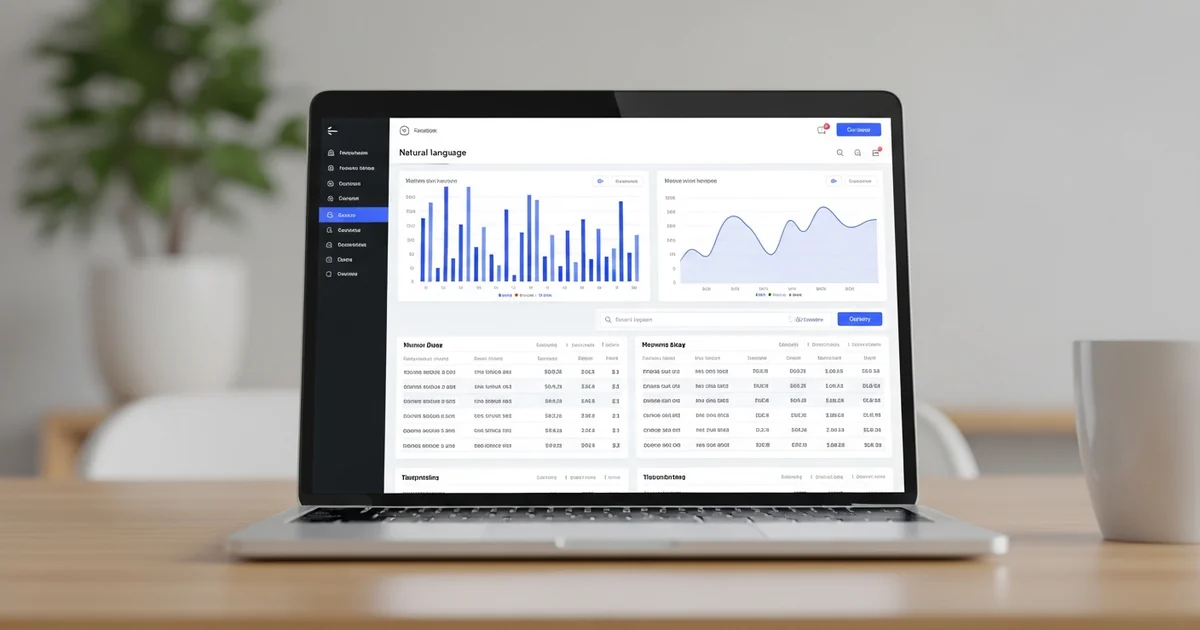

Online AI System to Analyze Data: Upload, Ask, Get Cited Answers

Zedly AI Editorial Team

February 28, 2026


When people search for an "online AI system to analyze data," they want something specific: upload a file, ask a question in plain English, and get an answer they can verify. Not a dashboard they have to build. Not a BI tool they have to configure. Not a Python notebook they have to learn. An analyst in a browser, ready in seconds.

These tools exist now, and they are genuinely useful. They also vary wildly in reliability, privacy practices, and what "analysis" actually means under the hood. Some generate text that sounds right but cites no source. Others run real code against your data and show every step.

This guide covers what these systems actually do, how they work, what to check before trusting one with your data, and how to test any platform in 30 minutes.

What an Online AI System to Analyze Data Actually Is

Strip away the marketing and the workflow is the same across every tool in this category:

- Connect or upload data. CSV, Excel, PDF, database export, or a direct connector to your source system.

- Ask a question in natural language. "What was our top-selling product last quarter?" or "Show me expense anomalies above $5,000."

- The system runs analysis. Under the hood, most tools convert your question into executable code (Python, R, or SQL), run it against your data, and return tables, charts, and a narrative summary.

- You review and export. Download charts, export structured data (CSV, Excel), or share a summary.

This is what separates these tools from general-purpose chatbots. ChatGPT can discuss your data if you paste it into the chat window. An AI data analysis system connects to your actual files, runs real computations, and (ideally) shows you how it arrived at every number.
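To make the "question in, computation out" step concrete, here is a minimal sketch of the kind of code a system might generate for "What was our top-selling product last quarter?". The sample data, the column names (`order_date`, `product`, `units`), and the `top_product` helper are all hypothetical; a real platform would generate something shaped by your actual schema.

```python
import csv
import io
from collections import defaultdict
from datetime import date

# Hypothetical sales export; a real upload would be a file, not an inline string.
SALES_CSV = """order_date,product,units
2025-10-03,Widget,40
2025-11-17,Gadget,65
2025-12-09,Widget,30
2026-01-05,Gadget,90
"""

def top_product(csv_text: str, start: date, end: date) -> tuple[str, int]:
    """Return (product, units) for the best seller between start and end, inclusive."""
    totals: dict[str, int] = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        if start <= date.fromisoformat(row["order_date"]) <= end:
            totals[row["product"]] += int(row["units"])
    # Pick the (product, total) pair with the largest total.
    return max(totals.items(), key=lambda item: item[1])

# "Last quarter" is resolved to explicit dates so the answer can be checked by hand.
print(top_product(SALES_CSV, date(2025, 10, 1), date(2025, 12, 31)))
```

The point of the sketch is that the answer comes from a computation over the rows, with an explicit date window you can inspect, rather than from text generated about the data.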

Four capability tiers to watch for:

Tier 1: Q&A over a single file. Upload one CSV, ask questions, get answers. Most tools start here.

Tier 2: Multi-file and multi-source joins. Upload an invoice log, a purchase order file, and a shipment tracker; ask questions across all three.

Tier 3: Reproducible analysis. The system shows the code it ran, lets you re-run the same query and get consistent outputs, and supports export of both results and methodology.

Tier 4: Governed enterprise system. Retention controls, role-based access, audit trails, and configurable data residency.

Many tools market themselves at Tier 3 or 4 but actually operate at Tier 1 or 2. The evaluation checklist later in this article helps you tell the difference.

How It Works Under the Hood

Buyers increasingly want to understand what happens between "ask a question" and "get an answer." Here is the typical pipeline, and what to look for at each stage.

Ingest

You upload a CSV, Excel workbook, or PDF. The system detects the schema: column names, data types, relationships between tables (if you uploaded multiple sheets or files). Good platforms handle messy inputs automatically: inconsistent date formats, mixed numeric and text columns, leading whitespace, and duplicate headers.

For a detailed look at what automatic schema detection produces, see how the spend analysis pipeline profiles a 12-sheet workbook and detects semantic roles, foreign keys, and anomaly flags without any configuration.
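As a rough illustration of the cleanup an ingest step has to perform, the sketch below normalizes headers and parses dates that arrive in more than one format. The function names and the list of accepted date formats are assumptions made for this example, not a description of any specific platform.

```python
import csv
import io
from datetime import date, datetime
from typing import Optional

# Assumed set of formats for this sketch; a real profiler would infer these per column.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y")

def dedupe_headers(headers: list[str]) -> list[str]:
    """Strip whitespace and suffix repeated names: amount, amount_2, amount_3, ..."""
    seen: dict[str, int] = {}
    cleaned = []
    for name in headers:
        name = name.strip()
        seen[name] = seen.get(name, 0) + 1
        cleaned.append(name if seen[name] == 1 else f"{name}_{seen[name]}")
    return cleaned

def parse_date(value: str) -> Optional[date]:
    """Try each known format in order; return None when nothing matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date()
        except ValueError:
            continue
    return None

def load_rows(csv_text: str) -> list[dict[str, str]]:
    """Read a CSV with cleaned headers and whitespace-trimmed cells."""
    reader = csv.reader(io.StringIO(csv_text))
    headers = dedupe_headers(next(reader))
    return [dict(zip(headers, (cell.strip() for cell in row))) for row in reader]
```

One caveat worth testing on any platform: a value like 03/04/2025 is ambiguous between US and European conventions. This sketch resolves it by format order, which is exactly the kind of silent decision you want a tool to surface.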

Analyze

The AI converts your question into a plan, typically Python or SQL, and executes it in a sandboxed environment. The sandbox is important: it means the generated code runs in an isolated container, not on shared infrastructure where other users' data could be accessible. The system returns tables, charts, and a narrative explanation.

The quality difference between platforms shows up here. Some tools generate code from scratch for every question, which means the same question can produce different code paths (and different results) on consecutive runs. Better platforms route questions to deterministic analysis skills: the same question on the same data always triggers the same computation. For an example of what generated charts look like in practice, see this dual-axis chart built from a single prompt across three Excel sheets.
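The idea of routing questions to deterministic skills can be sketched as a lookup from a normalized question to a fixed function. The phrase matching below is a deliberately simple stand-in for whatever intent detection a real platform uses, and the skill names are hypothetical.

```python
from collections import defaultdict
from typing import Callable

Rows = list[dict[str, str]]

def total_by_vendor(rows: Rows) -> dict[str, float]:
    """Fixed computation: sum the amount column per vendor, sorted by vendor name."""
    totals: dict[str, float] = defaultdict(float)
    for row in rows:
        totals[row["vendor"]] += float(row["amount"])
    return dict(sorted(totals.items()))

def row_count(rows: Rows) -> dict[str, int]:
    """Fixed computation: count the rows."""
    return {"rows": len(rows)}

# Hypothetical skill registry: each phrase maps to exactly one function.
SKILLS: dict[str, Callable] = {
    "total by vendor": total_by_vendor,
    "row count": row_count,
}

def route(question: str) -> Callable:
    """Normalize case and spacing, then return the first skill whose phrase appears."""
    normalized = " ".join(question.lower().split())
    for phrase, skill in SKILLS.items():
        if phrase in normalized:
            return skill
    raise LookupError(f"no deterministic skill matches: {question!r}")
```

Because the question selects existing code instead of producing new code, two runs on the same data follow the same path. The trade-off is coverage: questions outside the registry need a fallback, and that fallback is where run-to-run variation can reappear.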

Explain

This is where most tools fall short. Generating an answer is one thing; showing how the answer was derived is another. Look for:

- Computation traces: A step-by-step log of every operation (table loads, joins, filters, groupbys, calculations) that produced the result. See a close-up of a computation trace panel in the spend analysis walkthrough.

- Code visibility: Can you see the Python or SQL that ran? Can you modify it?

- Citations for document-based answers: When answering from PDFs or contracts, the system should cite page numbers and section references, not just generate plausible-sounding text.
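A computation trace does not need to be elaborate to be useful. The sketch below records each operation together with the row counts going in and out; the `Trace` class and the two operations are hypothetical and only meant to show what "a step-by-step log" can look like.

```python
from dataclasses import dataclass, field

@dataclass
class Trace:
    """Ordered, human-readable log of the operations behind a result."""
    steps: list[str] = field(default_factory=list)

    def record(self, operation: str, rows_in: int, rows_out: int) -> None:
        number = len(self.steps) + 1
        self.steps.append(f"{number}. {operation}: {rows_in} -> {rows_out} rows")

def filter_large(rows: list[dict], threshold: float, trace: Trace) -> list[dict]:
    """Keep rows whose amount exceeds the threshold and log the step."""
    kept = [row for row in rows if row["amount"] > threshold]
    trace.record(f"filter amount > {threshold}", len(rows), len(kept))
    return kept

def sum_amount(rows: list[dict], trace: Trace) -> float:
    """Sum the amount column and log the step."""
    total = sum(row["amount"] for row in rows)
    trace.record("sum(amount)", len(rows), 1)
    return total

trace = Trace()
expenses = [{"amount": 7000}, {"amount": 1200}, {"amount": 5600}]
total = sum_amount(filter_large(expenses, 5000, trace), trace)
print(total)
print("\n".join(trace.steps))
```

Row counts at each step are what make a trace auditable: a filter that unexpectedly drops most of the data shows up immediately.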

Export

Common expectations: download charts as images, export result tables as CSV or Excel, and generate a shareable summary or report. Some platforms also support direct export to accounting software (QBO, QFX) or integration with downstream tools. If you regularly need to categorize and chart transaction data, check whether the export formats match your existing workflow.

The Buyer's Evaluation Checklist

Use this checklist when comparing platforms. These are the dimensions where tools in this category diverge most, and where vendor marketing is least reliable.

- Accuracy and repeatability: Can the system show the code or steps it used? Can you re-run the same query on the same data and get the same output? If the answer to either is no, the system is generating text, not running analysis. Ask for a computation trace or execution log.

- Security and privacy: Encryption in transit (TLS) and at rest (AES-256) is table stakes. The real questions: Is your data used to train models? What is the retention policy? Is processing ephemeral (containers destroyed after each session) or persistent? Who operates the storage infrastructure? For sensitive business data, platforms that store files on independent infrastructure with configurable retention offer stronger control than those running on hyperscaler shared tenancy.

- Governance options: For regulated or sensitive data, you may need control over where inference happens (cloud, VPC, or on-premise). Role-based access, workspace-level policies, and data lineage matter at scale. If your data sensitivity requires it, look for platforms that offer tiered deployment models.

- Cost predictability: Pricing models vary: usage-based (per query, per compute minute), seat-based (per user per month), or hybrid. Understand what drives cost: sandbox compute time, storage volume, number of files, or query count. Some platforms charge separately for storage retention beyond a default window.
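For the repeatability item, one simple way to compare two runs is to fingerprint the exported result rows and check that the fingerprints match. This is a sketch of a check you could run yourself on exported data; the `fingerprint` helper is hypothetical and assumes the result has already been loaded as a list of dictionaries.

```python
import hashlib
import json

def fingerprint(result_rows: list[dict]) -> str:
    """Hash a canonical JSON form of the rows so key order does not affect the result."""
    canonical = json.dumps(result_rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

first_run = [{"vendor": "Acme", "total": 125.0}]
second_run = [{"total": 125.0, "vendor": "Acme"}]  # same content, different key order
print(fingerprint(first_run) == fingerprint(second_run))
```

Row order still counts as a difference here. If a platform returns the same rows in a different order on each run, sort them before hashing, and consider asking the vendor why the order is not stable.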

Common Use Cases

These are the scenarios where an online AI data analysis system consistently outperforms spreadsheets and traditional BI tools.

Finance: Expense Analysis and Cash Flow

Upload a bank export or AP ledger, ask "show me expense anomalies by vendor and month," and get a breakdown with flagged outliers. No pivot tables, no formula chains. For a full walkthrough of this pattern on a 50,000-row dataset with merchant-level anomaly drivers and KPI dashboards, see the AI spend anomaly detection guide.
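As a rough sketch of what "expense anomalies by vendor and month" can mean computationally, the example below totals spend per vendor per month and flags months that exceed a multiple of that vendor's median month. The median-ratio rule and the default `ratio` of 2.0 are assumptions chosen for illustration; real platforms may use different statistics.

```python
from collections import defaultdict
from statistics import median

def monthly_anomalies(transactions, ratio: float = 2.0):
    """transactions: iterable of (vendor, 'YYYY-MM-DD', amount) tuples.

    Returns (vendor, month, total, baseline) for each flagged vendor-month.
    """
    monthly: dict[tuple[str, str], float] = defaultdict(float)
    for vendor, day, amount in transactions:
        monthly[(vendor, day[:7])] += amount  # 'YYYY-MM' is the month key

    by_vendor: dict[str, list[float]] = defaultdict(list)
    for (vendor, _month), total in monthly.items():
        by_vendor[vendor].append(total)

    flagged = []
    for (vendor, month), total in sorted(monthly.items()):
        baseline = median(by_vendor[vendor])
        if baseline > 0 and total > ratio * baseline:
            flagged.append((vendor, month, total, baseline))
    return flagged
```

Returning the baseline next to each flagged total matters for review: it shows what the outlier was compared against, which is the same principle as a computation trace.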

Operations: Inventory, Sales, and Lead Times

Cross-file analysis shines here. Upload an inventory snapshot, a sales report, and a supplier lead time table. Ask "which SKUs have less than 2 weeks of inventory based on current sell-through rate?" The system joins the files, computes the metric, and flags the results.
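The weeks-of-inventory question reduces to a join on SKU followed by one division. Here is a minimal sketch, assuming the inventory snapshot and sales report have already been reduced to per-SKU dictionaries and that sell-through is measured over the last four weeks; both are assumptions for the example.

```python
def low_cover_skus(on_hand: dict[str, int],
                   units_sold_4w: dict[str, int],
                   min_weeks: float = 2.0) -> dict[str, float]:
    """Return {sku: weeks_of_cover} for SKUs below min_weeks of inventory."""
    flagged = {}
    for sku, stock in on_hand.items():
        weekly_rate = units_sold_4w.get(sku, 0) / 4  # average units per week
        if weekly_rate == 0:
            continue  # no recent sales: cover is undefined, so it is not flagged as low
        weeks = stock / weekly_rate
        if weeks < min_weeks:
            flagged[sku] = round(weeks, 2)
    return flagged

print(low_cover_skus({"A": 30, "B": 500, "C": 10}, {"A": 80, "B": 100}))
```

Note the explicit decision for SKUs with no recent sales. A tool that silently divides by zero, or silently drops those SKUs, is making a choice you should be able to see in its trace.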

Revenue Operations: Pipeline and Churn

Export your CRM pipeline and ask "show me deals that have been in the same stage for more than 30 days, grouped by rep." Or upload renewal data and ask "which accounts have declining usage in the last 3 months?" These are questions that live in dashboards nobody checks; asking them directly is faster.
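The stalled-deals question is a date difference plus a group-by. A minimal sketch, assuming each deal record carries a `rep`, a `name`, and a `stage_entered` date (hypothetical field names; CRM exports differ):

```python
from collections import defaultdict
from datetime import date

def stale_deals_by_rep(deals: list[dict], today: date, max_days: int = 30) -> dict:
    """Group deals that have sat in their current stage longer than max_days by rep."""
    grouped: dict[str, list[tuple[str, int]]] = defaultdict(list)
    for deal in deals:
        days_in_stage = (today - deal["stage_entered"]).days
        if days_in_stage > max_days:
            grouped[deal["rep"]].append((deal["name"], days_in_stage))
    return dict(grouped)
```

Passing `today` in explicitly, instead of reading the clock inside the function, keeps the result reproducible when the same query is re-run later.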

Legal and Compliance

Upload exported logs, audit reports, or regulatory filings. Ask questions and get cited answers with page references. This pattern works for internal audits, compliance reviews, and due diligence workflows where the answer needs to trace back to a specific document and paragraph.

Healthcare and Research: Dataset Exploration

Upload a research dataset (de-identified patient records, lab results, survey responses) and run exploratory analysis: distributions, correlations, missing value patterns, and outlier detection. The system handles the statistics; you focus on the interpretation. For a step-by-step guide to profiling a CSV before analysis, including missing values, duplicates, and outlier flagging, see the data profiling walkthrough.
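The profiling checks mentioned above (missing values, duplicates, outliers) can be sketched in a few lines. The example uses the common 1.5 × IQR rule for outliers, which is one convention among several, and the `profile` function is hypothetical.

```python
from statistics import quantiles

def profile(rows: list[dict], numeric_column: str) -> dict:
    """Count missing cells per column, exact duplicate rows, and IQR outliers."""
    columns = list(rows[0].keys())
    missing = {c: sum(1 for r in rows if r[c] in ("", None)) for c in columns}

    seen, duplicates = set(), 0
    for r in rows:
        key = tuple(r[c] for c in columns)
        if key in seen:
            duplicates += 1
        seen.add(key)

    values = [float(r[numeric_column]) for r in rows if r[numeric_column] not in ("", None)]
    q1, _median, q3 = quantiles(values, n=4)  # quartile cut points
    spread = q3 - q1
    low, high = q1 - 1.5 * spread, q3 + 1.5 * spread
    outliers = [v for v in values if v < low or v > high]

    return {"missing": missing, "duplicates": duplicates, "outliers": outliers}
```

Running a check like this yourself on a small sample is a quick way to sanity-test a platform's profiling output: the counts should agree, or the platform should explain why its definition differs.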

Test Any AI Data Analyst in 30 Minutes

Before committing to a platform, run this evaluation. It takes 30 minutes and tests the dimensions that matter most.

1. Upload a messy dataset. Use a real file, not a demo dataset. Pick one with missing values, inconsistent date formats, and at least two sheets or tables. If the system cannot handle ingestion cleanly, nothing else matters.

2. Ask 10 standard questions. Cover the basics: trends over time, top N rankings, segment breakdowns, outlier detection, and simple aggregations. Check whether the answers are plausible and whether the system explains its methodology.

3. Ask 3 audit questions. These test reliability:

   - "Show me the code you ran to produce that result."

   - "Which rows in the source data drove that number?"

   - "Re-run the same query. Did you get the same result?"

   If the system cannot answer these, it is generating text, not running analysis.

4. Export a result. Download a chart and a CSV. Check that the exported data matches what was shown on screen. Look for formatting issues, missing columns, or truncated rows.

5. Verify the retention policy. Delete your uploaded file. Confirm it is gone. Check whether session data (embeddings, cached results) is also cleared. Read the data processing terms and confirm the retention window matches what the marketing page claims.

Any platform that passes all five steps is worth a deeper evaluation. Any platform that fails on steps 3 or 5 is a risk for serious data work.
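The export check in the test above can be partly automated. The sketch below compares rows you copied from the screen against the downloaded CSV and lists row-count, column, and cell-level differences. The `export_mismatches` helper is hypothetical, and it compares values as text, so formatting differences such as 125 versus 125.00 are reported as mismatches on purpose.

```python
import csv
import io

def export_mismatches(on_screen: list[dict], exported_csv: str) -> list[str]:
    """List differences between on-screen rows and the exported CSV text."""
    exported = list(csv.DictReader(io.StringIO(exported_csv)))
    problems = []

    if len(exported) != len(on_screen):
        problems.append(f"row count: screen={len(on_screen)} export={len(exported)}")

    expected_cols = set(on_screen[0]) if on_screen else set()
    actual_cols = set(exported[0]) if exported else set()
    missing = expected_cols - actual_cols
    if missing:
        problems.append(f"missing columns: {sorted(missing)}")

    # Compare cell by cell over the rows and columns both sides share.
    shared = sorted(expected_cols & actual_cols)
    for number, (shown, saved) in enumerate(zip(on_screen, exported), start=1):
        for col in shared:
            if str(shown[col]) != saved[col]:
                problems.append(f"row {number}, {col}: screen={shown[col]!r} export={saved[col]!r}")
    return problems
```

An empty list means the export matched what you saw. Anything else is a concrete finding to raise with the vendor.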

Frequently Asked Questions

What data formats can an online AI data analysis system handle?

Most systems accept CSV and Excel files (XLSX, XLS). Better platforms also handle PDFs, Word documents, and multi-sheet workbooks with automatic schema detection. Look for support for messy real-world files: inconsistent date formats, missing values, mixed data types in the same column, and multi-sheet joins. If the system only accepts perfectly clean single-table CSVs, it will not survive contact with actual business data.

Is it safe to upload sensitive business data?

That depends entirely on the platform. Key questions: Does it use your data to train models? How long does it retain your files? Is processing ephemeral (destroyed after each session) or persistent? Who operates the storage infrastructure? Platforms that process in single-use containers and store files on independent infrastructure (not hyperscaler shared tenancy) offer stronger isolation. Always check the vendor's data processing agreement and retention policy before uploading anything sensitive. For a detailed breakdown of the upload lifecycle (transit, processing, storage, deletion), see our guide on private AI to upload documents at /blog/private-ai-to-upload-documents.

Does it generate charts automatically?

Yes, most AI data analysis tools generate charts from natural language prompts. You describe the chart you want ("bar chart of revenue by quarter," "scatter plot of price vs volume") and the system writes the code, runs it, and returns the visualization. The quality varies: some systems produce basic matplotlib output, while others generate polished, presentation-ready charts with proper axis labels, legends, and color schemes.

Can it analyze multiple files at once?

This is where tools differ significantly. Basic tools handle one file per session. More capable platforms let you upload multiple files and ask cross-file questions: "Compare Q1 invoices against the purchase order log and flag mismatches." Cross-file analysis requires the system to detect relationships between tables (shared keys, matching date ranges) and join them correctly. Ask specifically about multi-file support during evaluation.
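A cross-file question like the invoice comparison comes down to joining on a shared key and checking each pair. Here is a minimal sketch, assuming both files share a `po_number` column and store amounts as integer cents (hypothetical field names, chosen to avoid floating-point comparison issues):

```python
def reconcile(invoices: list[dict], purchase_orders: list[dict]) -> list[tuple[str, str]]:
    """Flag invoices with no matching PO or with an amount that differs from the PO."""
    po_amounts = {po["po_number"]: po["amount_cents"] for po in purchase_orders}
    mismatches = []
    for inv in invoices:
        expected = po_amounts.get(inv["po_number"])
        if expected is None:
            mismatches.append((inv["invoice_id"], "no matching PO"))
        elif inv["amount_cents"] != expected:
            mismatches.append(
                (inv["invoice_id"], f"amount {inv['amount_cents']} != PO {expected}")
            )
    return mismatches
```

The hard part in practice is not this loop but finding the right key. When evaluating a platform, check which columns it chose to join on and whether it tells you how many rows failed to match.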

Can I control retention and delete everything on demand?

Not all platforms offer this. Some retain your data for a fixed window (often 30 days of inactivity) or extend retention for paid tiers. Look for platforms that let you delete files immediately, clear session data after analysis, and configure retention policies. For regulated industries (healthcare, finance, legal), configurable retention is not optional; it is a compliance requirement.

How is this different from using ChatGPT on a spreadsheet?

ChatGPT can answer questions about data you paste into the chat, but it has significant limitations for serious analysis. Without Code Interpreter, it cannot run code against your actual data, so the numbers it reports can be hallucinated. It also has context window limits that truncate large files, and it does not provide computation traces showing how results were derived. Purpose-built data analysis tools run real Python or SQL against your full dataset, validate their outputs, and show the exact code path that produced each number.

Choosing the Right Platform

If your data is non-sensitive and you need quick answers, most "chat with your data" tools will work. Upload a CSV, ask a question, get a chart. The barrier to entry is low and the results are useful for exploratory work.

If your data is sensitive, regulated, or business-critical, the evaluation changes. Retention controls, ephemeral processing, independent storage infrastructure, and auditable computation traces move from "nice to have" to requirements. The 30-minute test above will separate platforms that meet these standards from those that only claim to.

Zedly processes data in single-use sandboxes (destroyed after each session), stores files on independent infrastructure (Backblaze B2, not AWS/Azure/Google), and provides computation traces showing every step of the analysis. For sensitive business data where auditability and privacy matter, that combination is the point.

Try it with your own data.

Upload a spreadsheet, ask a question, and see the computation trace. Ephemeral processing, cited answers, and full export. Start with a sample dataset or request the security pack.
